I’m intrigued by ChatGPT and all of the AI systems that are showing up nowadays. I’m a math teacher, however, and can’t help but wonder: does AI get the answer right? I’ve read about “hallucinations” and worry that if I use it to produce worksheets, etc., they might have errors!

We should probably start with the idea that AI isn’t going to replace you as a teacher, but rather supplement what you do and allow you to be more productive, efficient, and hopefully less overworked. We humans still have to read through what the modern generation of so-called Generative AI tools create for us. AI software like ChatGPT, Bing Chat, and Google’s new Gemini (formerly Bard) system communicate with enormous confidence, even when what they’re sharing is incorrect.


Of course, humans get things wrong all the time, sometimes out of ignorance, sometimes out of a deliberate skewing of the truth. Since AI systems are developed by analyzing enormous amounts of information produced by humans, it’s inevitable that they too can be led in the wrong direction without knowing that “source A” is reliable but “source B” is biased and highly skewed in its analysis. As one simple example, while many people would say that medical recommendations from the US Centers for Disease Control are accurate and trustworthy, others view them with suspicion and skepticism. How can an AI capture both perspectives?

You’re now wondering how this relates to something as objective as mathematics. The answer is that, more likely than not, tools like ChatGPT answer math queries based on existing solutions they have previously analyzed from other online sources. If people tend to get a calculation wrong, it’s entirely possible the AI will too. This category of error goes by the colorful name of hallucinations and it’s a very real problem.

CAN AI SYSTEMS SOLVE MATH WORD PROBLEMS?

To bring this to a more tangible place, let’s compare both ChatGPT and Google Gemini to see if they agree on the answers to fairly tricky math word problems. These are the kinds of word problems you might have in class (to be inevitably met with groans from your students!). Here’s the first one:

“How much of a 70% orange juice drink must be mixed with 44 gallons of a 20% orange juice drink to obtain a mixture that is 50% orange juice?”

Rather than just an answer, however, we would like these tools to explain how they reached their conclusion, and both do an admirable job with the task. First, ChatGPT:

To solve this problem, we can set up an equation based on the amount of pure orange juice in each of the two drinks.

Let x represent the amount of 70% orange juice drink we need to mix.

The pure orange juice in the 70% orange juice drink is 0.70x

The pure orange juice in the 20% orange juice drink is 0.20 × 44 = 8.8 gallons.

When we mix these, we want the resulting mixture to have 50% orange juice. So, the total amount of pure orange juice in the mixture should be half of the total volume of the mixture, which would be 1/2(x + 44).

Now, we can set up the equation:

0.70x + 8.8 = 0.50(x + 44)

Let’s solve for x:

0.70x + 8.8 = 0.50x + 22

0.70x – 0.5x = 22 – 8.8

0.20x = 13.2

x = 13.2 / 0.20

x = 66

So, you would need to mix 66 gallons of the 70% orange juice drink with the 44 gallons of the 20% orange juice drink to obtain a mixture that is 50% orange juice.

The same question asked of Gemini reveals that its answer isn’t quite the same (I’ll skip its “show your work” explanation):

You need to mix approximately 14.7 gallons of the 70% orange juice drink with the 44 gallons of the 20% drink to obtain a final mixture that is 50% orange juice.

Two different answers! ChatGPT says you need to mix 66 gallons and 44 gallons, while Gemini says 14.7 gallons + 44 gallons will work just fine.

Which one’s correct? Are they both correct? Is the answer something else entirely? You can see that you probably won’t be replaced by an AI system any time soon, that’s for sure.
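One way to check the two claims without redoing the algebra is to plug each number back into the mixture and see what concentration comes out. Here is a minimal Python sketch; the function name and its default arguments are my own choices for illustration, taken from the numbers in the word problem:

```python
def mixture_concentration(x_gallons, strong=0.70, weak=0.20, weak_gallons=44):
    """Fraction of pure juice after mixing x gallons of the strong drink
    with the fixed amount of the weak drink."""
    juice = strong * x_gallons + weak * weak_gallons
    total = x_gallons + weak_gallons
    return juice / total


# Plug in each tool's claimed answer and compare against the 50% target.
for label, x in [("ChatGPT", 66), ("Gemini", 14.7)]:
    print(f"{label}: {x} gallons -> {mixture_concentration(x):.1%} orange juice")
```

Plugging in 66 gallons gives a 50% mixture, while 14.7 gallons comes out at roughly a third orange juice, well short of the target.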

This isn’t to say that these tools can’t help you out. But let’s try one more word problem…

A MORE COMPLEX WORD PROBLEM

Let’s test these with a more complicated problem:

“An airplane flying with a speed of 700 mi/h is detected 1000 miles away. 30 minutes later another plane with a max speed of 800 mi/h takes off to intercept the first plane. How long will it take the interceptor plane to catch up to the other plane?”

I’ll share Google Gemini’s full answer this time so you can see how it also does a nice job of showing its work:

To find how long it takes the interceptor plane to catch up, we need to consider two key points:

1. Distance Traveled by the First Plane:

The first plane is detected 1000 miles away.

30 minutes later translates to 0.5 hours (since 1 hour = 60 minutes).

In that time, the first plane travels at a speed of 700 mi/h, so it covers a distance of 700 mi/h * 0.5 h = 350 miles.

2. Relative Speed and Time to Meet:

The interceptor plane travels at a speed of 800 mi/h, while the first plane is going at 700 mi/h.

This means the relative speed between them is 800 mi/h – 700 mi/h = 100 mi/h.

Now, we need to find the time it takes for the interceptor plane to cover the remaining distance between them, which is the initial distance minus the distance traveled by the first plane: 1000 miles – 350 miles = 650 miles.

So, the time it takes for the interceptor plane to catch up is 650 miles / 100 mi/h = 6.5 hours.

Therefore, it will take the interceptor plane 6.5 hours to catch up to the first plane.

According to Gemini, it’s going to take 6 1/2 hours for the planes to rendezvous. ChatGPT, however, has a different answer:

It will take the interceptor plane 3.5 hours to catch up to the other plane.

ChatGPT also does a nice job of showing its work and it all seems logical and reasonable. But, again, they don’t agree! Since you’re a math teacher, I’m sure you’ve figured out the correct answer to both problems in the meantime.
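Part of the difficulty is that the problem never says whether the first plane is heading toward the interceptor or away from it. A short Python sketch can try both readings. The assumptions here are mine, not part of the original problem: the interceptor takes off from the point where the detection was made, both planes fly along the same straight line, and the interceptor flies at its maximum speed. The function name is also just illustrative.

```python
def intercept_hours(distance_mi, target_mph, interceptor_mph, delay_h, approaching):
    """Hours after takeoff until the interceptor reaches the first plane.

    Assumes straight-line flight and an interceptor that launches from the
    detection point (assumptions, not stated in the word problem).
    """
    if approaching:
        # The gap shrinks during the delay, and the planes close head-on.
        gap = distance_mi - target_mph * delay_h
        closing_speed = interceptor_mph + target_mph
    else:
        # The gap grows during the delay, and the interceptor chases from behind.
        gap = distance_mi + target_mph * delay_h
        closing_speed = interceptor_mph - target_mph
    return gap / closing_speed


for approaching in (True, False):
    hours = intercept_hours(1000, 700, 800, 0.5, approaching)
    direction = "approaching" if approaching else "flying away"
    print(f"First plane {direction}: {hours:.2f} hours")
```

Under these assumptions the head-on reading works out to about 0.43 hours and the chase reading to 13.5 hours, so neither matches 6.5 or 3.5 hours. Notice that Gemini's work shrinks the gap by 350 miles (as if the plane were approaching) but then divides by the 100 mi/h difference in speeds (as if it were chasing), which mixes the two readings.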

THEY’RE GREAT FOR WORKSHEETS

The bigger issue here is that while these Generative AI tools are helpful and interesting, it remains critical for us humans to check their work, just as you would for any student turning in an answer to a problem. This has implications for student work too, of course, because if one of them turns in a homework assignment that is word-for-word the solution from Gemini, say, that’s obviously going to be a problem unless you explicitly gave them permission to lean on these tools.

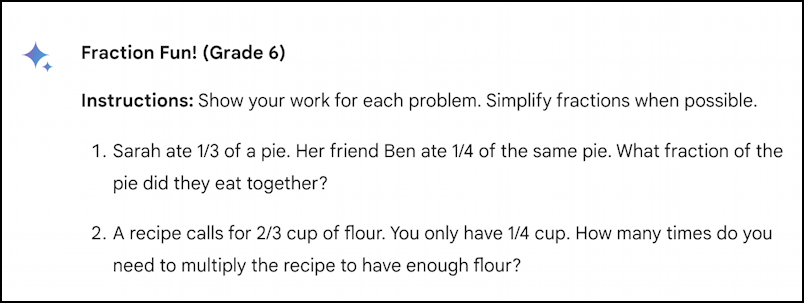

Worksheets, however, are where these tools really shine. For example, for the prompt “generate a 10 question math worksheet focused on fractions and both multiplication and division. aim for a 6th grade math level and have the problems be fairly easy” Gemini produces the following (just the first two questions shown):

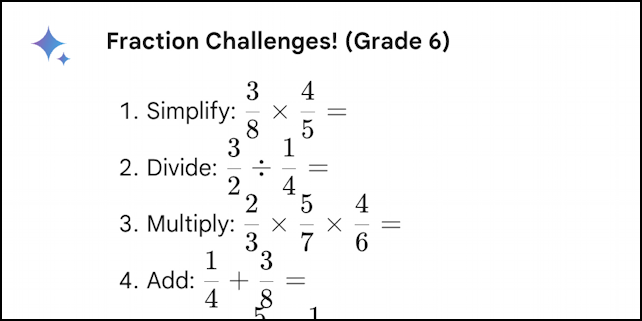

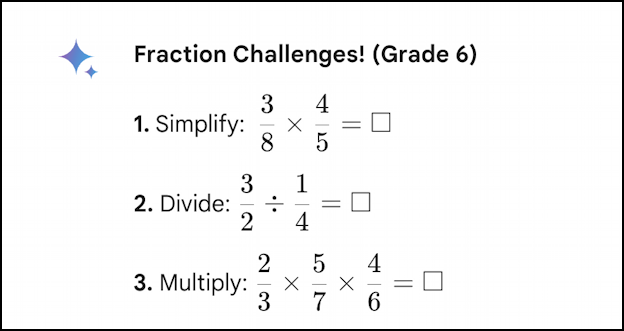

Prefer to just have the equations for your students to solve, rather than more word problems? Simply tell the AI. I responded to the above with “not word problems, just math equations that need to be solved” and it promptly listed:

Still not quite right – the spacing is a bit too tight. Solution? Tell it to space it out more: “space out each question more so it’s more legible” and the results are ready to go:

Looks good and you can further refine things if you want, saying you want bigger fractions, more division, a mix of word problems and equations to solve, etc. You can even ask one of these Generative AI tools to solve the equations, but as we’ve seen, you might want to double-check your answers before they show up on the official answer key!
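If you do ask the AI for an answer key, a few lines of Python can double-check it using exact fraction arithmetic. This is only a sketch: the three problems and their "claimed" answers below are made up for illustration (they are not from Gemini's worksheet), and the last one is deliberately wrong so you can see what a mismatch looks like.

```python
from fractions import Fraction

# Hypothetical worksheet items: (left, operator, right, answer claimed by the AI)
problems = [
    (Fraction(1, 2), "*", Fraction(3, 4), Fraction(3, 8)),
    (Fraction(2, 3), "/", Fraction(4, 5), Fraction(5, 6)),
    (Fraction(3, 5), "*", Fraction(5, 9), Fraction(1, 4)),  # deliberately wrong
]


def check(left, op, right, claimed):
    """Return (is_correct, actual_answer) for one multiply or divide problem."""
    actual = left * right if op == "*" else left / right
    return actual == claimed, actual


for left, op, right, claimed in problems:
    ok, actual = check(left, op, right, claimed)
    status = "OK" if ok else f"MISMATCH (should be {actual})"
    print(f"{left} {op} {right} = {claimed}: {status}")
```

Fraction keeps everything as exact, reduced fractions, so there is no rounding to worry about when comparing against the key.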

Nonetheless, there’s no denying that these tools can do a masterful job of supplementing teachers, producing fun and interesting worksheets (try “math word problems that involve baseball” for example), and revolutionizing the labor involved in teaching on a day-to-day basis. Good luck!

Pro Tip: I’ve been writing about AI for a while now. Please check out my AI and ChatGPT Help Area for more tutorials and help articles while you’re visiting!

As a former math teacher, I am intrigued by the mixture problem that was posed to ChatGPT and Gemini. 66 gallons is of course the correct answer, but for the life of me, I cannot “reverse engineer” how Gemini came up with 14.7 gallons, since the work was not shown. Could you please show the work/explanation that Gemini went through?

Ahhh a math teacher asking me to show my work. I will instead invite you to go to gemini.google.com and paste in my prompt to see what it produces!

Thank you Dave!

Bob