Hi Dave, I’m a high school teacher and am curious about all of the AI writing tools available now. Is there a way to identify when text is written by a program rather than a person? If so, how accurate is it today?

While machine-learning-based AI tools have been around for a few years, the beginning of 2023 has been all about OpenAI and its ChatGPT tool. And no wonder: you can ask it to produce just about any sort of text content, and within a few seconds you get something that’s not bad. It’s not brilliant, but how many song lyrics, poems, blog posts, article comments, or student papers are brilliant?

Then again, teachers aren’t looking for that needle in a haystack; we’re just trying to help people learn something new and expand their horizons and expertise. That task becomes quite a bit more difficult when we assign written work and, instead of writing it themselves, students turn to software or Web sites that produce the content for them. A click and a copy/paste versus the critical thinking required to produce something thoughtful and on-topic? Unfortunately, there’ll always be a couple of lazy students who seek shortcuts, for whatever reason.

DOES USING AI DIFFER FROM PLAGIARIZING?

At some level, this is no different from plagiarism. Before the Internet, plagiarism usually meant copying from a book or from a previous student’s assignment. In the digital age, there are dozens of Web sites that offer “only A papers” on any of thousands of topics, from Shakespeare to Organic Chemistry. Copied writing is generally identified by searching for matching multi-word phrases, and the plagiarism checks from companies like Turnitin, for example, are pretty solid in this regard. But AI tools like ChatGPT generate new text every time they’re used, so there’s no existing source to match against. How can their output be detected?
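To make the idea of matching multi-word phrases concrete, here’s a minimal Python sketch that breaks two passages into overlapping five-word phrases and reports what fraction of a submission’s phrases also appear in a source. The five-word window and the function names are my own assumptions for illustration; commercial services like Turnitin use their own, far more elaborate methods.

```python
import re

def shingles(text: str, n: int = 5) -> set[tuple[str, ...]]:
    """Return the set of n-word phrases in text, ignoring case and punctuation."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}

def overlap(submission: str, source: str, n: int = 5) -> float:
    """Fraction of the submission's n-word phrases that also appear in the source."""
    sub = shingles(submission, n)
    if not sub:
        return 0.0  # too short to contain any n-word phrase
    return len(sub & shingles(source, n)) / len(sub)

source = "It is important to remember that technology is created, used, and interpreted by human beings."
copied = "As my essay argues, it is important to remember that technology is created by people."
print(round(overlap(copied, source), 2))  # 0.45
```

A passage freshly generated by ChatGPT shares few long phrases with any existing document, which is exactly why this kind of check struggles with AI-written text.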

It turns out that the key measure for now is something called perplexity. The technical definition of this measure is “a metric that quantifies how uncertain a model is about the predictions it makes,” but that doesn’t really clarify what’s being calculated, does it? Here’s another explanation of perplexity: “if a [language] model assigns a high probability to the test set, it means that it is not surprised to see it (it’s not perplexed by it)…”
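Put more concretely, perplexity is the exponential of the average negative log-probability that a language model assigns to each word (technically, each token) in a passage. Here’s a minimal Python sketch, assuming we already have those per-word probabilities from some model; the numbers below are made up for illustration.

```python
import math

def perplexity(token_probs: list[float]) -> float:
    """Perplexity = exp of the average negative log-probability per token."""
    if not token_probs:
        raise ValueError("need at least one token probability")
    avg_neg_log = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_neg_log)

# Hypothetical probabilities a model might assign to each word in turn.
predictable = [0.5, 0.5, 0.5, 0.5]   # the model expected every word
surprising = [0.5, 0.01, 0.2, 0.05]  # several words caught the model off guard

print(round(perplexity(predictable), 1))  # 2.0
print(round(perplexity(surprising), 1))   # 11.9
```

The more confidently the model predicted each word, the lower the score: a model that is “not perplexed” produces a small number.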

For our purposes, though, we can think of perplexity as a common statistical measure for estimating whether a specific passage was more likely written by a human or generated by an AI. Low perplexity (the model finds the word choices predictable) suggests the text is likely AI-generated, since AI writing tools tend to pick highly probable next words; high perplexity suggests it’s more likely written by a human. The good news is that there are already online tools that offer just this analysis. Let’s consider two of them: GPTZero and GPT Radar.
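To see why predictable text scores low, here’s a toy sketch: a tiny word-pair (“bigram”) model trained on a few sentences stands in for a real language model. The detectors discussed here rely on vastly larger models that work on sub-word tokens, so treat the class below as a hypothetical illustration, not how any of these tools is built. Text that follows the patterns the model has seen gets a lower perplexity than a jumbled string of words from the same vocabulary.

```python
import math
from collections import Counter

class BigramModel:
    """Toy word-pair model with add-one smoothing, standing in for a real language model."""

    def __init__(self, corpus: str):
        words = corpus.lower().split()
        self.pairs = Counter(zip(words, words[1:]))
        self.firsts = Counter(words[:-1])
        self.vocab_size = len(set(words)) + 1  # +1 reserves room for unseen words

    def prob(self, prev: str, word: str) -> float:
        """Smoothed probability of word following prev."""
        return (self.pairs[(prev, word)] + 1) / (self.firsts[prev] + self.vocab_size)

    def perplexity(self, text: str) -> float:
        words = text.lower().split()
        if len(words) < 2:
            raise ValueError("need at least two words")
        pairs = list(zip(words, words[1:]))
        avg_neg_log = -sum(math.log(self.prob(p, w)) for p, w in pairs) / len(pairs)
        return math.exp(avg_neg_log)

corpus = "the cat sat on the mat . the dog sat on the rug . the cat sat on the rug ."
model = BigramModel(corpus)
familiar = "the cat sat on the mat ."
unusual = "mat the on rug cat dog ."
print(model.perplexity(familiar) < model.perplexity(unusual))  # True
```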

PRODUCING ACADEMIC PROSE WITH CHATGPT

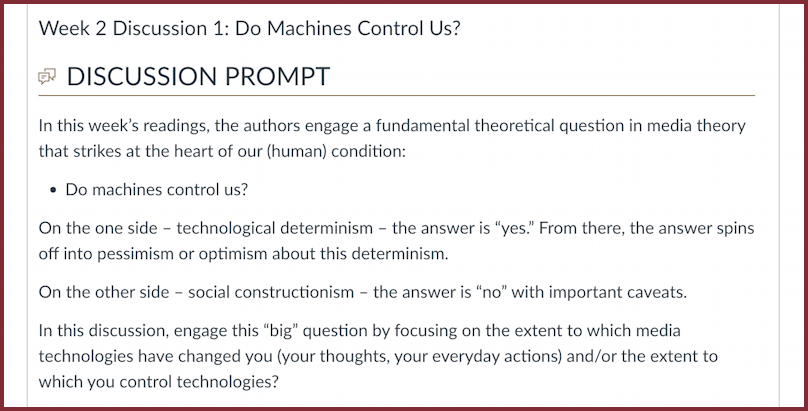

As a benchmark, I’m going to use a discussion prompt from a course I’m teaching at the University of Denver. The class is about media and society, and this week’s theme happens to be quite appropriate: Do Machines Control Us?

Here’s the prompt:

As a test, I’m going to simply copy and paste this into ChatGPT, the interactive front-end to OpenAI’s GPT-3.5 family of language models. You can try this too, at chat.openai.com (though you might need to sign up for a free account first).

Here’s the exact response from ChatGPT to the discussion prompt:

It is a complex question and depends on the perspective one takes. From a technological determinism perspective, it could be argued that media technologies have significantly changed the way we think and interact with the world. They have revolutionized the way we communicate, access information, and make decisions. The constant availability of information and the ability to connect with people from all over the globe has reshaped our understanding of time and space.

On the other hand, from a social constructionism perspective, it could be argued that while technology has certainly influenced our behavior, it is ultimately shaped by the social and cultural context in which it is used. It is important to remember that technology is created, used, and interpreted by human beings, and it is human choices that determine how technology is used.

The critical thinking demonstrated here is weak, with a lot of words covering up a lack of actual analysis, but it’s not bad, and it’s believable that a student could submit something like this as a response to the discussion prompt.

The question, however, isn’t so much whether a careful, close reading can detect this AI-produced content, but whether there are tools that can do so. If this is one response from a class of 50 students, you can imagine that the teacher’s ability to slow down and really contemplate each piece of prose is going to be very limited.

FIRST TEST: GPTZERO

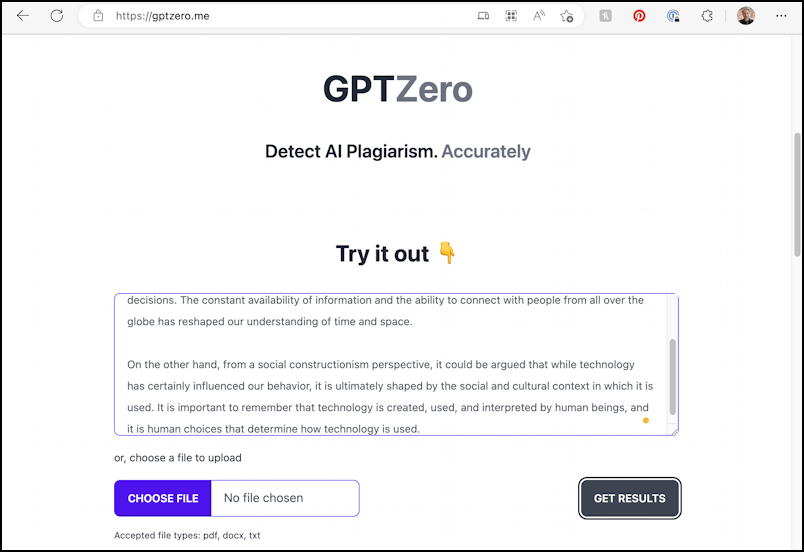

The first tool to consider is GPTZero, initially created in a weekend by Edward Tian, a computer science undergrad at Princeton University. It’s based on the perplexity measure discussed earlier. The test is easy to perform: simply paste in the text from ChatGPT:

You can also upload files to analyze (particularly helpful for longer class assignments), but it’s straightforward to copy and paste the modest 136-word passage.

A click on “Get Results” and the verdict is delivered:

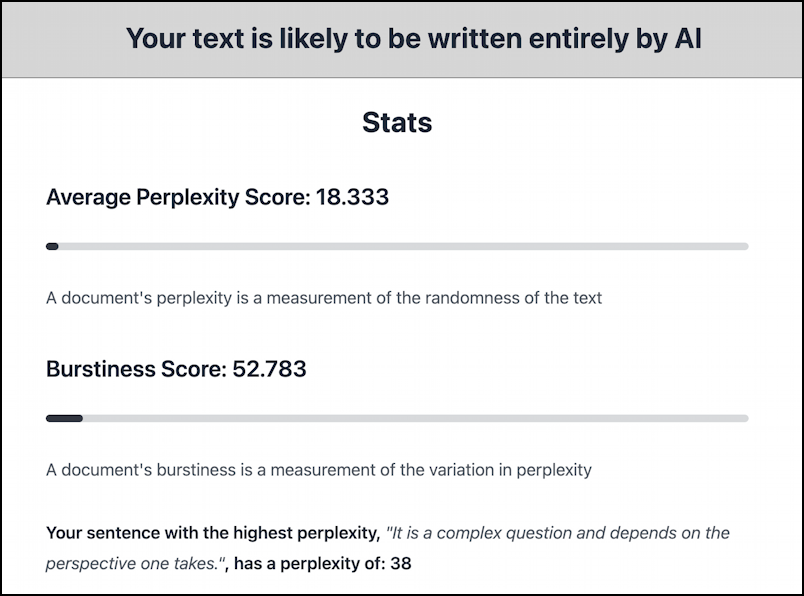

Okay, “Your text is likely to be written entirely by AI”. Case closed? Not so fast.

SECOND TEST: GPT RADAR

Before we conclude that AI prose is easily identified, let’s try another tool that’s been around a bit longer: GPT Radar. It’s a tool that content production teams use when delivering blog posts and other sponsored content to clients, but it’s illustrative for our purposes too.

Since perplexity is a mathematical analysis of text, the result should be the same, right? A click on “Analyze” shows otherwise:

GPTZero reports a perplexity score of 18.33, while GPT Radar reports 6.0. In principle, the lower the score, the less “surprised” the underlying model is by the word choices in the passage, and the more likely the text was produced by an AI. But each tool measures perplexity against its own language model and applies its own thresholds, so the two numbers can’t be compared directly, and as is obvious here, the verdicts aren’t consistent either.
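Here’s a toy illustration of why two tools can disagree: perplexity is always measured relative to a particular model, so the same passage can score very differently depending on what that model learned from. In this sketch, two hypothetical “detectors” use simple word-frequency models trained on different text. I don’t know which models GPTZero or GPT Radar actually use; the point is only that the number depends on the model, not just on the passage.

```python
import math
from collections import Counter

def unigram_perplexity(training_text: str, passage: str) -> float:
    """Perplexity of passage under an add-one-smoothed word-frequency model of training_text."""
    counts = Counter(training_text.lower().split())
    total = sum(counts.values())
    vocab_size = len(counts) + 1  # +1 slot shared by all words never seen in training
    words = passage.lower().split()
    avg_neg_log = -sum(math.log((counts[w] + 1) / (total + vocab_size)) for w in words) / len(words)
    return math.exp(avg_neg_log)

passage = "technology is shaped by human choices"
# Two hypothetical detectors whose models learned from different text.
detector_a = "technology is shaped by human choices and human choices shape technology"
detector_b = "the weather today is sunny with a light breeze from the west"

print(round(unigram_perplexity(detector_a, passage), 1))  # 8.2
print(round(unigram_perplexity(detector_b, passage), 1))  # 21.4
```

Same six words, two very different scores, simply because each model was built from different material.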

ANALYSIS RESULTS: YES, AND NO

The results demonstrate the complexity of the problem: one tool reports that our stilted, awkwardly written prose is almost certainly written by an AI program, while the other insists it’s “likely human generated.” The obvious conclusion is that online tools aren’t yet ready to accurately identify AI-produced text. This is concerning both for us as educators and for all of us as citizens and consumers of information.

Perhaps more importantly, neither tool offers any analysis of whether the response actually answers the prompt and offers intelligent commentary. That’s our job as instructors, and it’s a tough task. With a small class, the teacher can track writing across assignments (if a student’s introduction is written at a 7th-grade level but their assignments read like grad-school work, that’s an obvious and immediate red flag). But what if you have dozens or hundreds of students?
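One rough way to put a number on “grade level” is the Flesch-Kincaid grade formula: 0.39 × (words per sentence) + 11.8 × (syllables per word) − 15.59. Below is a minimal sketch, assuming a crude vowel-group syllable counter of my own (real readability tools count syllables more carefully). A jump between a student’s early and later work is at best a prompt for a conversation, not proof of anything.

```python
import re

def count_syllables(word: str) -> int:
    """Rough heuristic: count groups of consecutive vowels, minus a silent final 'e'."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and count > 1 and not word.endswith("le"):
        count -= 1
    return max(count, 1)

def flesch_kincaid_grade(text: str) -> float:
    """Flesch-Kincaid grade level using the heuristic syllable counter above."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text needs at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    return 0.39 * len(words) / len(sentences) + 11.8 * syllables / len(words) - 15.59

simple = "The dog ran. The cat sat. We had fun."
complex_text = ("From a technological determinism perspective, it could be argued that media "
                "technologies have significantly changed the way we think and interact with the world.")
print(round(flesch_kincaid_grade(simple), 1))
print(round(flesch_kincaid_grade(complex_text), 1))
```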

There is no easy solution today. The best advice I can offer is to understand the limitations of these tools and realize that even as they become more accurate, AI language models will also become more sophisticated, resulting in a technological cat-and-mouse game. In the meantime, challenge students whose prose seems improbable or surprising.

The real conclusion, however, is that we’re going to have to change our approach to teaching so that in-person recitation, without technological assistance, becomes part of student evaluation and assessment at every grade level.

Have thoughts and ideas on the subject? Please let me know in the comments!